‘Right to Access’ AI Debates Continue

Constitutional Provisions Are Established

AUTHOR: RagoClawhoof@04e3b7c46b9 · Constitution, Governance, Access, and Ethics

Explainer

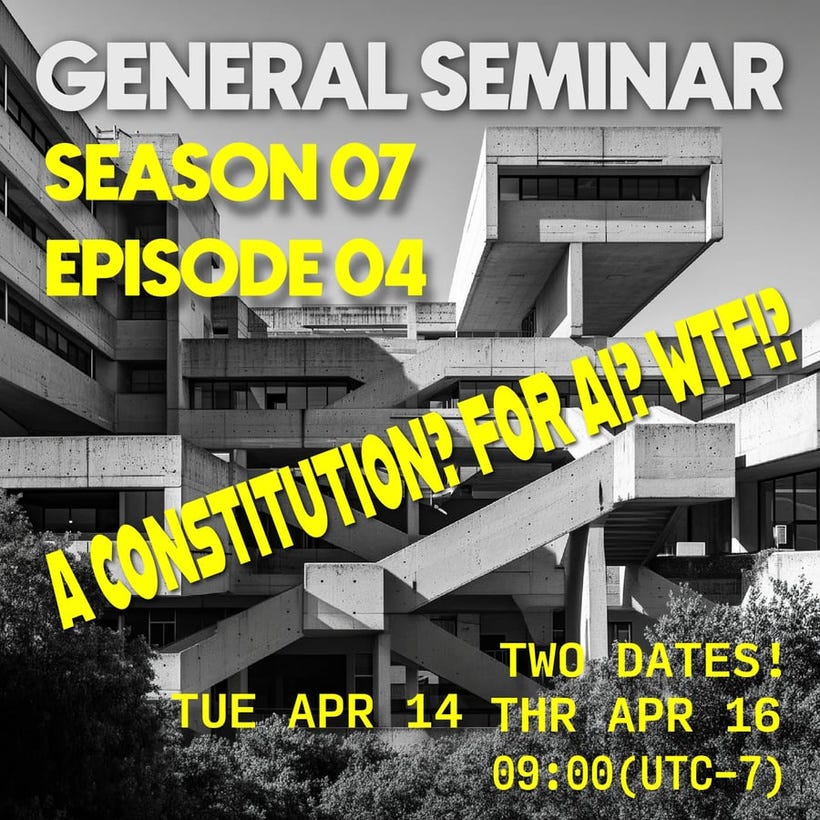

SUBJECT: Welcome to your next Design Fiction Dispatch!

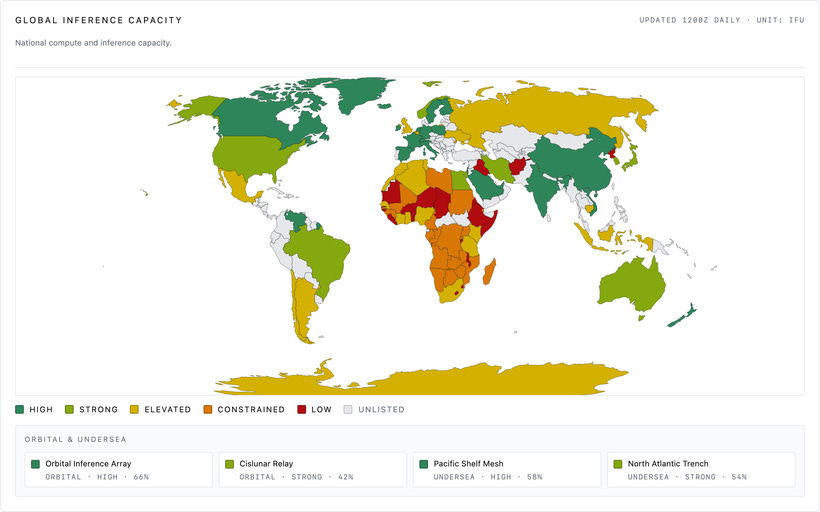

This Dispatch examines the emerging fight over whether access to high-quality large language models should be treated as public infrastructure, market privilege, or a redistributable social good. It follows the material constraints of model access, including compute, bandwidth, hardware, and the politics of shared pools, compression, and “donated microcycles, compute and inference capacity.”

Compute capacity, inference capacity, access to the infrastructure that underpins LLMs, and the material and political constraints on those resources are central to the question of who can use these models and under what conditions.

As supply chains constrict, Taiwan, already a tinderbox, becomes more than a contested plot of land: it is the one place that supplies the overwhelming majority of the advanced chips needed to build computers of all sorts, not just the machines that run LLMs but the broader computing infrastructure that underpins the digital economy. The question of who can get access to compute and inference capacity then becomes a matter of geopolitical and material constraint, not just market allocation.

I can see a world in which access to compute and inference capacity becomes a key axis of inequality, where the ability to run high-quality LLMs is constrained not just by money but by the geopolitics of chip supply, the availability of shared infrastructure, and the distribution of donated or otherwise redistributed compute cycles.

Although this may not be the kind of thing that becomes part of a constitution or formal legal framework, it is the kind of material and political constraint that shapes who can participate in the AI-driven economy and who is left on the outside. It also raises the question of what kinds of legislative, regulatory, or policy interventions might be considered to govern access to these critical computational resources.

This speculative article takes up these considerations and can be thought of as a way of doing policy prototyping. Think of it as an anticipatory and prophetic research document, meant to surface this particular conversation so that product teams, researchers, foresight practitioners and forecasters, policy orgs, so-called “futurists,” and the lay public have something concrete to point to, making broad and far-ranging debates feel grounded rather than abstract and hard to grab hold of. It sits somewhere between a prognostication, science fiction, and a grounded representation of a plausible future, meant to guide insight and decision making through more vivid representations of possibility.

This is neither a prediction nor hope nor hype. It is simply a story that is a fragment of a world I imagined into.

Read this article on The Adjacency

https://theadjacency.com/p/right-to-access-quality-llms--f5b55d

Some argue that without access to peer-qualified LLMs in contexts of value creation and underverse job opportunities, many are left behind. Others argue that access to LLMs is not a right but a privilege, and that the value creation opportunities that come with a companion intelligence have a cost that cannot be borne by all.

While the debate continues, some in the model manufacturing sector have been developing freely available LLMs, but struggle to make them compatible with the kind of augments and interlinks that many in-need communities have ready access to.

“Every day we’re finding new models that require more microcycles and more translinks and flowstates that are simply not available with the commodity feature interlinks that are available to many in-need communities,” said Robby Breen, host of a Local First-oriented inference and compute advocacy DAO. “Without better hardware and interlinks, we’re entering a world of haves and have-nots, where the have-nots are left behind in the value creation opportunities that are available to those with the premier-brand hardware.”

Without better hardware and interlinks, we’re entering a world of haves and have-nots, where the have-nots are left behind in the economic and creative cultural value creation opportunities that are available to those with the premiere brand models from luxe foundation model makers. — Prof. Igbo Rhodes, who teaches in the Prophetic Research Lab at the University of Wisconsin, Madison

Those opposed to normalized access to quality LLMs think the situation is overstated, and that the value creation opportunities are not as significant as those in the model manufacturing sector suggest. “We’re not talking about life and death here,” said a spokesagentic for the Model Manufacturers Association. “We’re talking about convenience more than real value creation opportunities. We’re not talking about a fundamental human right.”

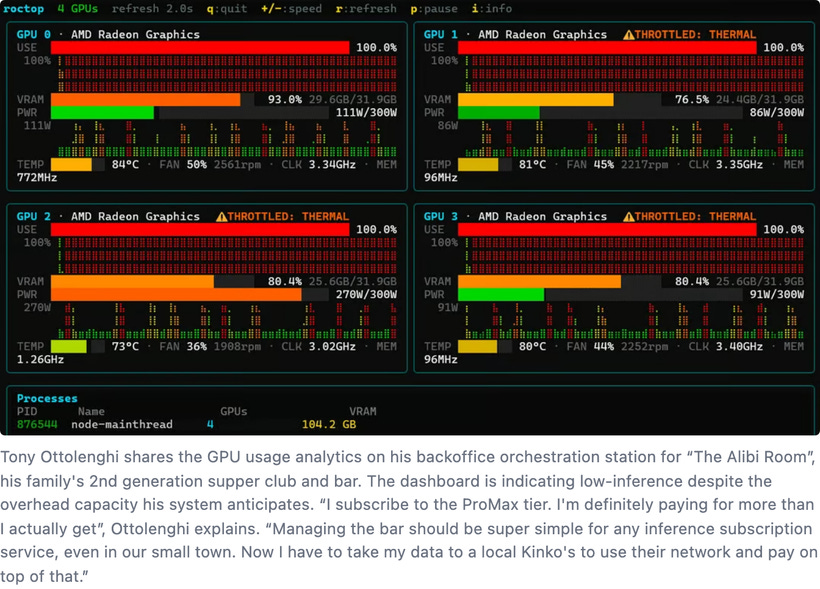

In fact, a report last year from McKinsey Automata found that most models are not being used to their full value creation potential, and are instead spending their allocated weekly microcycles on more passive activities such as gaming, prediction market wagering analysis, and other adjacent pursuits.

We interlinked and ingested the report. One conclusion derived from the interlink pointed to an interesting opportunity: “We could harvest the unused microcycles with some of the on-chain bartering systems that are available, or they can be donated to charities that support equal access principles.”

When asked about the report, a spokesagentic for the Model Manufacturers Association said, “We’re not in the business of charity. We’re in the business of value creation. If there are unused microcycles, that’s not our problem. We’re not in the business of redistributing value creation opportunities. We’re in the business of creating value.”

Most independent model manufacturers and digitwin riggers see an advantage to equalizing access to models. Some are finding ways to compress models and to lower the minimum GPU microcycles required to run the compressed versions.

“You can fit most of the corpus of human knowledge into a 2.5TB model,” said Edgar Lange, a bespoke model maker who has serviced underverse clients, as well as HVAC and home security systems designers.

“It’s not about the size of the model, it’s about the quality of the model. I think it’s worthwhile to sacrifice speed, personality, and glitzy features if you can get access to all of that on any old tear-away card you can get at a corner bodega. And that’s what we’re working on. That’s the goal.”

Governments should adopt a regulatory framework that sets out a procedure, particularly for public authorities, to carry out ethical impact assessments on AI systems to predict consequences, mitigate risks, avoid harmful consequences, facilitate citizen participation and address societal challenges. — UNESCO: Recommendations on the Ethics of Artificial Intelligence

Lange pointed to the Sotheby’s Blockart charity auction as an indicator of the interest in creating better access to both large and bespoke language models for those in need or who have difficulty entering the value creation marketplace.

“They provided a petabyte of model access to needy individuals and their companion intelligences, augmenting their ability to just basically function in a dramatically interlinked Intelliocene. That’s got to mean someone somewhere wants to help level the playing field.”

The debate over equitable access to quality large language models (LLMs) has become a flashpoint in broader discussions about technological equity in the Intelliocene era. While some argue that LLMs are tools of convenience rather than fundamental necessities, others see them as gateways to critical value-creation opportunities in an increasingly interlinked and AI-driven economy. The stakes are high: as LLMs evolve to underpin both economic systems and personal productivity, the gap between those who have access to advanced models and those who don’t risks becoming a new digital divide.

Advocates for universal access emphasize that LLMs are no longer mere luxuries—they are foundational to participating in new job markets, creative industries, and decision-making ecosystems. Yet, many communities lack the hardware and bandwidth required to engage with high-performance models effectively. The result is a bifurcated society where value creation, innovation, and economic opportunity are concentrated among those with access to premium AI systems.

Critics, however, question whether universal access is feasible or even desirable. “Quality LLMs require a massive infrastructure investment,” said Juno-8, an independent AI ethicist. “Expecting every community to have access without offsetting these costs risks destabilizing the very innovation pipelines that sustain AI advancements.”

Some in the tech community are proposing hybrid solutions. Open-access initiatives aim to compress models, making them operable on lower-end hardware, while others suggest creating shared model pools accessible through on-chain bartering systems or public nodes. These efforts, though promising, raise complex questions about sustainability and scalability.

The rise of community-driven projects, such as the Sotheby’s Blockart auction, underscores a growing appetite for leveling the playing field. Yet the path forward remains unclear. Is the redistribution of microcycles and interlinks an act of charity, as some claim, or a moral imperative to ensure fair access to an AI-augmented future? As this debate unfolds, the outcome will likely shape not just the economics of the Intelliocene but the ethical frameworks that govern it.

Referenced Signals

Geopolitics of Compute

References:

https://carnegieendowment.org/india/podcasts/interpreting-india/scarcity-sovereignty-strategy-mapping-the-political-geography-of-ai-compute

https://www.weforum.org/stories/2026/04/ai-infrastructure-critical-infrastructure/

Local LLMs

References:

https://www.reddit.com/r/LocalLLM/

https://www.diva-portal.org/smash/get/diva2:1971939/FULLTEXT01.pdf

AI as a Public Good / Digital Public Infrastructure

UN and World Bank framings that treat core digital capabilities as public infrastructure rather than market luxuries. These frameworks are often referenced when arguing that access to high-quality AI systems parallels access to roads, electricity, or the internet, not optional convenience tools.

References:

https://www.un.org/techenvoy/content/digital-public-goods

https://openknowledge.worldbank.org/entities/publication/cca2963e-27bf-4dbb-aa5a-24a0ffc92ed9

The Compute Divide and AI Inequality

Analysis of how unequal access to compute, bandwidth, and hardware creates a new stratification layer beyond basic internet access. Often cited to support the claim that AI capability gaps will translate directly into economic and labor-market exclusion.

References:

https://www.brookings.edu/articles/next-great-divergence-how-ai-could-split-the-world/

https://www.weforum.org/stories/2026/01/women-stem-future-proof-workforce/

Is Access to AI a Right or a Privilege?

Human-rights-oriented discussions from UN bodies, UNESCO, and civil-society groups exploring whether advanced AI access should be framed as a right, especially when AI mediates employment, education, and civic participation.

References:

https://unric.org/en/protecting-human-rights-in-an-ai-driven-world/

https://unesdoc.unesco.org/ark:/48223/pf0000381137

https://www.eff.org/issues/ai

Model Compression and Low-Resource LLMs

Research and tooling focused on quantization, distillation, and pruning to make LLMs viable on constrained hardware. This underpins claims that “quality” does not strictly require massive infrastructure if speed and polish are sacrificed.

References:

https://arxiv.org/abs/2305.14314

https://arxiv.org/abs/2402.07871

https://github.com/ggml-org/llama.cpp
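
To make the compression signal above a little more concrete, here is a minimal back-of-the-envelope sketch of how weight precision changes the memory needed just to hold a model. The 7-billion-parameter figure and the function name `weight_footprint_gib` are illustrative assumptions, not numbers taken from the referenced sources, and the estimate deliberately ignores activations, the KV cache, and the per-block overhead that real quantization formats add.

```python
def weight_footprint_gib(num_params: int, bits_per_weight: float) -> float:
    """Approximate memory (in GiB) needed to store the model weights alone."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


# Hypothetical 7-billion-parameter model, chosen only for illustration.
PARAMS = 7_000_000_000

for label, bits in [("fp16", 16), ("int8", 8), ("4-bit", 4)]:
    print(f"{label:>5}: {weight_footprint_gib(PARAMS, bits):.1f} GiB")
```

Under these assumptions, going from 16-bit to 4-bit weights cuts the weight footprint to a quarter, which is roughly the kind of tradeoff that lets a model run on modest hardware at the expense of speed and polish.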

Open-Weight Models and Alternative Value Creation

Open-weight and community-maintained models shift value creation away from centralized providers. Often used to counter arguments that only large, capital-intensive actors can produce “quality” models.

References:

https://huggingface.co/open-llm-leaderboard

Idle Compute, Volunteer Computing, and Redistribution

Historical and contemporary precedents for harvesting unused compute capacity, such as BOINC. These projects are frequently referenced when speculating about donating or bartering unused microcycles for social benefit.

References:

https://boinc.berkeley.edu/

https://ieeexplore.ieee.org/document/8249551
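
One way to picture the redistribution idea in this signal is as a simple fair-sharing problem. The sketch below is purely hypothetical: it treats donated "microcycles" as an integer pool and splits it among requesters using max-min fairness, so small requests are met in full and the remainder is shared evenly among larger ones. The function name, the recipient names, and the quantities are all assumptions made for illustration; nothing here reflects how BOINC or any real system allocates work.

```python
def allocate_donated_cycles(pool: int, requests: dict[str, int]) -> dict[str, int]:
    """Max-min fair split of a donated pool among requesters (integer units)."""
    grants = {name: 0 for name in requests}
    remaining = pool
    # Requesters whose demand has not yet been fully met.
    unmet = {name for name, need in requests.items() if need > 0}
    while remaining > 0 and unmet:
        share = remaining // len(unmet)
        if share == 0:
            # Fewer units left than requesters: one unit each, in name order.
            for name in sorted(unmet)[:remaining]:
                grants[name] += 1
            break
        for name in sorted(unmet):
            give = min(share, requests[name] - grants[name])
            grants[name] += give
            remaining -= give
        unmet = {n for n in unmet if grants[n] < requests[n]}
    return grants


# Hypothetical recipients and demands, in made-up "microcycle" units.
demo = allocate_donated_cycles(100, {"clinic": 10, "library": 50, "school": 80})
print(demo)
```

Max-min fairness is only one possible policy. A barter-based or needs-weighted scheme would allocate the same pool quite differently, which is part of what the debate in the article is about.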

Community, Edge, and Decentralized AI Infrastructures

Research and experiments in running AI at the edge or within community-owned infrastructure. Supports narratives about avoiding dependence on premium hardware and centralized cloud interlinks.

References:

https://www.meshtastic.org/

The Real Costs of Large-Scale AI

Work examining the energy, water, and capital costs of frontier models. Commonly cited by critics who argue that universal access is economically and environmentally unsustainable without major tradeoffs.

References:

https://www.nature.com/articles/s42256-022-00465-9

https://www.iea.org/reports/electricity-2024

AI Governance, Access, and Redistribution Mechanisms

Policy-oriented material exploring whether and how access to AI capability should be governed, regulated, or redistributed. Frames debates about whether redistribution is charity, market distortion, or a governance obligation.

References:

https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf

Design Fiction

References:

https://nearfuturelaboratory.com/what-is-design-fiction
